Embedded Text Detection

Automatically detect if an image contains natural text or embedded text such as personal information or watermarks.

Overview

The text detection API can help you determine if an image contains natural text or artificial (embedded) text.

Text detection does not act on the actual text content. If you need to analyze the text content itself (for example to detect profanity, email addresses or phone numbers), please use the Text Moderation for Image model.

Principles

Text detection does not use any image metadata to determine whether text is present in an image. The file extension, the metadata and the file name do not influence the result. The classification is made using only the pixel content of the image.

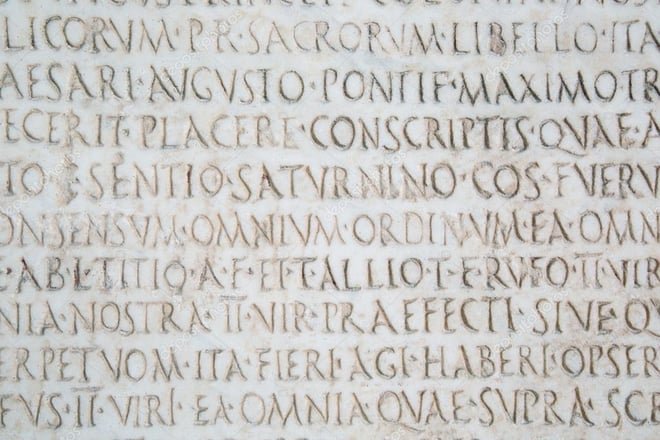

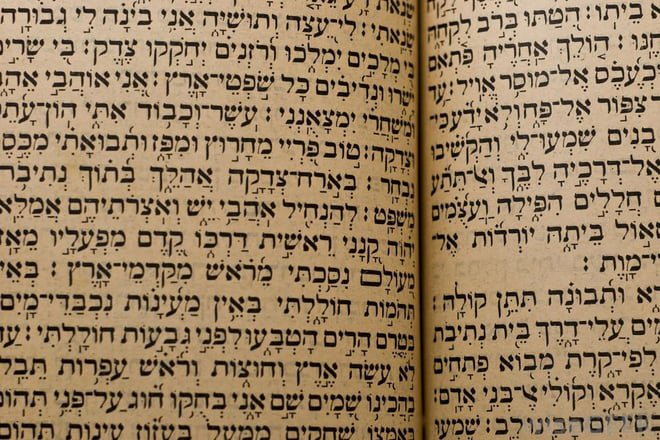

The model works for a host of different alphabets: Latin, Chinese, Japanese, Korean, Arabic, Hebrew, Hindi and more.

Use-cases

- Require that users submit or upload images without artificial text

- Hide ads that have been artificially added

- Filter images containing personal information such as phone numbers, email addresses or usernames

- Detect watermarks

Limitations

- Text smaller than 5% of the width or height of the image is not detected.

Natural text

The returned value is between 0 and 1. Images with a natural text value closer to 1 are likely to contain natural text, while images with a value closer to 0 are unlikely to contain natural text.

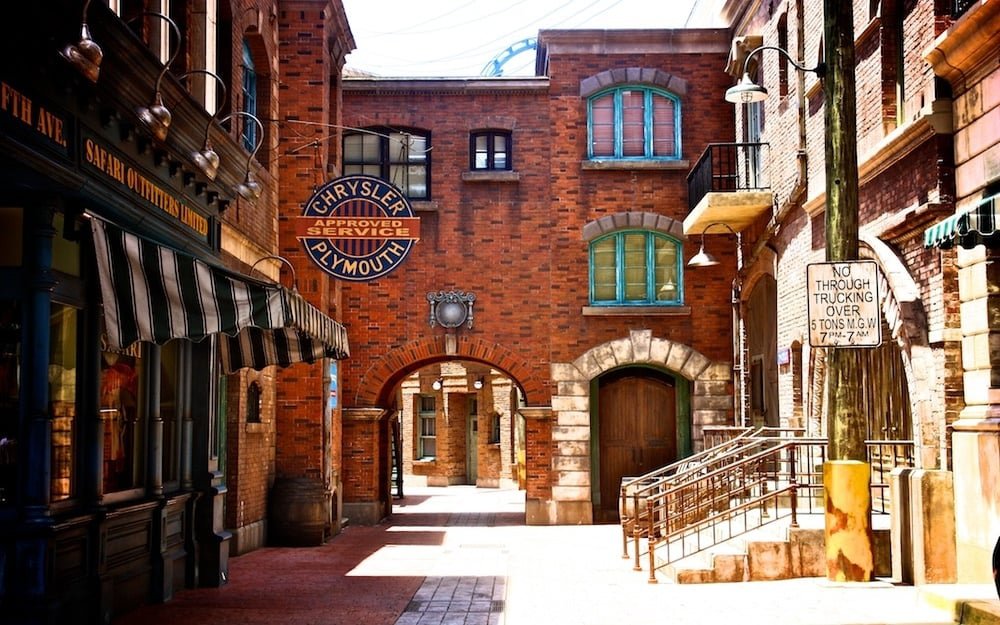

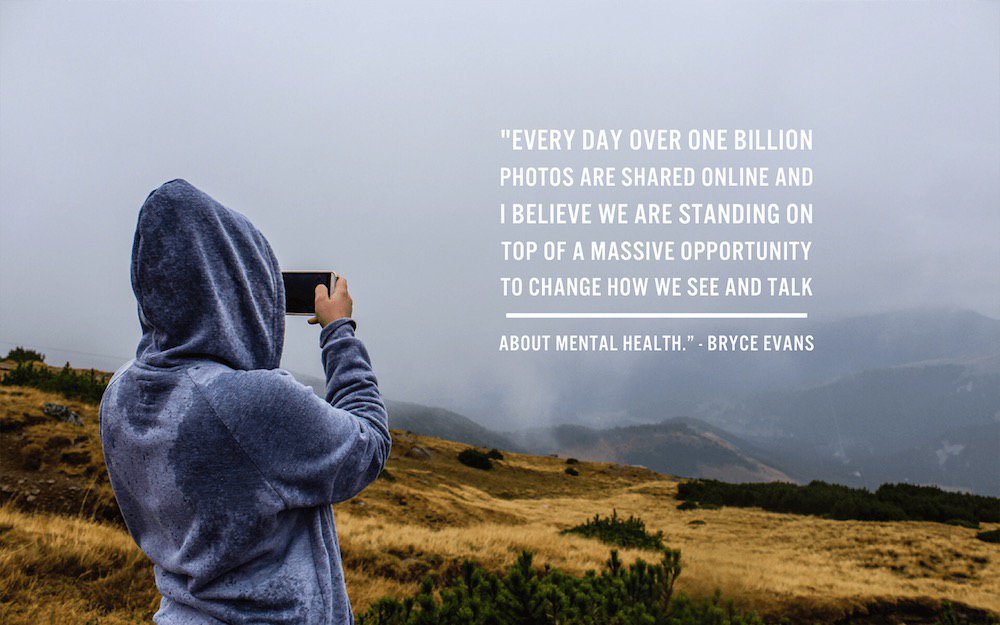

Artificial text

The returned value is between 0 and 1. Images with an artificial text value closer to 1 are likely to contain artificial text, while images with a value closer to 0 are unlikely to contain artificial text.
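As a rough illustration of how the two scores might be combined, the sketch below turns them into a simple label. The parameter names mirror the `has_natural` and `has_artificial` fields of the API response. The 0.5 threshold and the function name `describe_text_scores` are assumptions made for this example, not values recommended by the API, so tune the threshold on your own data.

```python
def describe_text_scores(has_natural: float, has_artificial: float,
                         threshold: float = 0.5) -> str:
    """Summarize the two scores as a human-readable label.

    The threshold is a hypothetical cut-off chosen for this example.
    """
    natural = has_natural >= threshold
    artificial = has_artificial >= threshold
    if natural and artificial:
        return "natural and artificial text"
    if artificial:
        return "artificial text"
    if natural:
        return "natural text"
    return "no text"


# Scores taken from the sample image response in this document
print(describe_text_scores(0.19986, 0.99932))  # prints "artificial text"
```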

Boxes

The values returned (x1, y1, x2, y2) help locate the text present in the image.

For each box, you get a label that indicates the type of text (text-natural or text-artificial).
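The sample response further down shows box coordinates as fractions between 0 and 1. Assuming the x values are relative to the image width and the y values to the image height, which this page does not state explicitly, a box could be converted to pixel coordinates as sketched below. The helper name `box_to_pixels` and the 1200x800 image size are hypothetical.

```python
def box_to_pixels(box: dict, width: int, height: int) -> tuple:
    """Convert a box with relative x1, y1, x2, y2 values to integer pixels.

    Assumes x values are fractions of the image width and y values are
    fractions of the image height.
    """
    left = round(box["x1"] * width)
    top = round(box["y1"] * height)
    right = round(box["x2"] * width)
    bottom = round(box["y2"] * height)
    return left, top, right, bottom


# Box copied from the sample response; the image size is made up
box = {"x1": 0.18466, "y1": 0.01757, "x2": 0.8555, "y2": 0.244,
       "label": "text-artificial"}
print(box_to_pixels(box, width=1200, height=800))
```

The resulting tuple can be used to crop or draw a rectangle with an imaging library of your choice.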

Use the model (images)

If you haven't already, create an account to get your own API keys.

Detect embedded and natural text in images

Let's say you want to moderate the following image:

You can either share a URL to the image, or upload the image file. Supported formats include JPEG, PNG, WebP, AVIF and more.

Option 1: Send image URL

Here's how to proceed if you choose to share the image URL:

curl -X GET -G 'https://api.sightengine.com/1.0/check.json' \

-d 'models=text' \

-d 'api_user={api_user}&api_secret={api_secret}' \

--data-urlencode 'url=https://sightengine.com/assets/img/examples/text1-1200.jpg'

# this example uses requests

import requests

import json

params = {

'url': 'https://sightengine.com/assets/img/examples/text1-1200.jpg',

'models': 'text',

'api_user': '{api_user}',

'api_secret': '{api_secret}'

}

r = requests.get('https://api.sightengine.com/1.0/check.json', params=params)

output = json.loads(r.text)

$params = array(

'url' => 'https://sightengine.com/assets/img/examples/text1-1200.jpg',

'models' => 'text',

'api_user' => '{api_user}',

'api_secret' => '{api_secret}',

);

// this example uses cURL

$ch = curl_init('https://api.sightengine.com/1.0/check.json?'.http_build_query($params));

curl_setopt($ch, CURLOPT_RETURNTRANSFER, true);

$response = curl_exec($ch);

curl_close($ch);

$output = json_decode($response, true);

// this example uses axios

const axios = require('axios');

axios.get('https://api.sightengine.com/1.0/check.json', {

params: {

'url': 'https://sightengine.com/assets/img/examples/text1-1200.jpg',

'models': 'text',

'api_user': '{api_user}',

'api_secret': '{api_secret}',

}

})

.then(function (response) {

// on success: handle response

console.log(response.data);

})

.catch(function (error) {

// handle error

if (error.response) console.log(error.response.data);

else console.log(error.message);

});

See request parameter description

| Parameter | Type | Description |

| url | string | URL of the image to analyze |

| models | string | comma-separated list of models to apply |

| api_user | string | your API user id |

| api_secret | string | your API secret |
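Since `models` is a comma-separated string, it can be convenient to build it from a list. The sketch below assembles the query parameters for the URL-based call. The helper name `build_check_params` is hypothetical and only covers the four parameters listed in the table.

```python
def build_check_params(image_url: str, models: list,
                       api_user: str, api_secret: str) -> dict:
    """Assemble the query parameters described in the table above."""
    if not models:
        raise ValueError("at least one model is required")
    return {
        "url": image_url,
        # The API expects a single comma-separated string
        "models": ",".join(models),
        "api_user": api_user,
        "api_secret": api_secret,
    }


params = build_check_params(
    "https://sightengine.com/assets/img/examples/text1-1200.jpg",
    ["text"],
    "{api_user}",
    "{api_secret}",
)
print(params["models"])
```

The resulting dictionary can be passed as `params=` to `requests.get`, as in the Python example above.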

Option 2: Send image file

Here's how to proceed if you choose to upload the image file:

curl -X POST 'https://api.sightengine.com/1.0/check.json' \

-F 'media=@/path/to/image.jpg' \

-F 'models=text' \

-F 'api_user={api_user}' \

-F 'api_secret={api_secret}'

# this example uses requests

import requests

import json

params = {

'models': 'text',

'api_user': '{api_user}',

'api_secret': '{api_secret}'

}

files = {'media': open('/path/to/image.jpg', 'rb')}

r = requests.post('https://api.sightengine.com/1.0/check.json', files=files, data=params)

output = json.loads(r.text)

$params = array(

'media' => new CurlFile('/path/to/image.jpg'),

'models' => 'text',

'api_user' => '{api_user}',

'api_secret' => '{api_secret}',

);

// this example uses cURL

$ch = curl_init('https://api.sightengine.com/1.0/check.json');

curl_setopt($ch, CURLOPT_POST, true);

curl_setopt($ch, CURLOPT_RETURNTRANSFER, true);

curl_setopt($ch, CURLOPT_POSTFIELDS, $params);

$response = curl_exec($ch);

curl_close($ch);

$output = json_decode($response, true);

// this example uses axios and form-data

const axios = require('axios');

const FormData = require('form-data');

const fs = require('fs');

const data = new FormData();

data.append('media', fs.createReadStream('/path/to/image.jpg'));

data.append('models', 'text');

data.append('api_user', '{api_user}');

data.append('api_secret', '{api_secret}');

axios({

method: 'post',

url:'https://api.sightengine.com/1.0/check.json',

data: data,

headers: data.getHeaders()

})

.then(function (response) {

// on success: handle response

console.log(response.data);

})

.catch(function (error) {

// handle error

if (error.response) console.log(error.response.data);

else console.log(error.message);

});

See request parameter description

| Parameter | Type | Description |

| media | file | image to analyze |

| models | string | comma-separated list of models to apply |

| api_user | string | your API user id |

| api_secret | string | your API secret |

API response

The API will then return a JSON response with the following structure:

{

"status": "success",

"request": {

"id": "req_22Qd0gUNmRH4GCYLvYtN6",

"timestamp": 1512483673.1405,

"operations": 1

},

"text": {

"has_artificial": 0.99932,

"has_natural": 0.19986,

"boxes": [

{

"x1": 0.18466,

"y1": 0.01757,

"x2": 0.8555,

"y2": 0.244,

"label": "text-artificial"

}

]

},

"media": {

"id": "med_22Qdfb5s97w8EDuY7Yfjp",

"uri": "https://sightengine.com/assets/img/examples/text1-1200.jpg"

}

}

Successful Response

Status code: 200, Content-Type: application/json

| Field | Type | Description |

| status | string | status of the request, either "success" or "failure" |

| request | object | information about the processed request |

| request.id | string | unique identifier of the request |

| request.timestamp | float | timestamp of the request in Unix time |

| request.operations | integer | number of operations consumed by the request |

| media | object | information about the media analyzed |

| media.id | string | unique identifier of the media |

| media.uri | string | URI of the media analyzed: either the URL or the filename |
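To illustrate how these fields fit together, here is a minimal sketch that checks `status` and pulls the scores and boxes out of a decoded response. The function name `extract_text_result` is hypothetical, and raising `RuntimeError` on a non-success status is a design choice of this example rather than documented API behavior. The sample data is a trimmed copy of the response shown above.

```python
import json


def extract_text_result(response: dict) -> dict:
    """Return the scores and boxes from a decoded check.json response."""
    if response.get("status") != "success":
        raise RuntimeError("request did not succeed")
    text = response["text"]
    return {
        "has_artificial": text["has_artificial"],
        "has_natural": text["has_natural"],
        "boxes": text.get("boxes", []),
    }


sample = json.loads("""
{
  "status": "success",
  "text": {
    "has_artificial": 0.99932,
    "has_natural": 0.19986,
    "boxes": [
      {"x1": 0.18466, "y1": 0.01757, "x2": 0.8555, "y2": 0.244,
       "label": "text-artificial"}
    ]
  }
}
""")

result = extract_text_result(sample)
for box in result["boxes"]:
    print(box["label"], box["x1"], box["y1"], box["x2"], box["y2"])
```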

Error

Status codes: 4xx and 5xx. See how error responses are structured.

Use the model (videos)

Detect embedded and natural text in videos

Option 1: Short video

Here's how to proceed to analyze a short video (less than 1 minute):

curl -X POST 'https://api.sightengine.com/1.0/video/check-sync.json' \

-F 'media=@/path/to/video.mp4' \

-F 'models=text' \

-F 'api_user={api_user}' \

-F 'api_secret={api_secret}'

# this example uses requests

import requests

import json

params = {

# specify the models you want to apply

'models': 'text',

'api_user': '{api_user}',

'api_secret': '{api_secret}'

}

files = {'media': open('/path/to/video.mp4', 'rb')}

r = requests.post('https://api.sightengine.com/1.0/video/check-sync.json', files=files, data=params)

output = json.loads(r.text)

$params = array(

'media' => new CurlFile('/path/to/video.mp4'),

// specify the models you want to apply

'models' => 'text',

'api_user' => '{api_user}',

'api_secret' => '{api_secret}',

);

// this example uses cURL

$ch = curl_init('https://api.sightengine.com/1.0/video/check-sync.json');

curl_setopt($ch, CURLOPT_POST, true);

curl_setopt($ch, CURLOPT_RETURNTRANSFER, true);

curl_setopt($ch, CURLOPT_POSTFIELDS, $params);

$response = curl_exec($ch);

curl_close($ch);

$output = json_decode($response, true);

// this example uses axios and form-data

const axios = require('axios');

const FormData = require('form-data');

const fs = require('fs');

const data = new FormData();

data.append('media', fs.createReadStream('/path/to/video.mp4'));

// specify the models you want to apply

data.append('models', 'text');

data.append('api_user', '{api_user}');

data.append('api_secret', '{api_secret}');

axios({

method: 'post',

url:'https://api.sightengine.com/1.0/video/check-sync.json',

data: data,

headers: data.getHeaders()

})

.then(function (response) {

// on success: handle response

console.log(response.data);

})

.catch(function (error) {

// handle error

if (error.response) console.log(error.response.data);

else console.log(error.message);

});

See request parameter description

| Parameter | Type | Description |

| media | file | video to analyze |

| models | string | comma-separated list of models to apply |

| interval | float | frame interval in seconds, out of 0.5, 1, 2, 3, 4, 5 (optional) |

| api_user | string | your API user id |

| api_secret | string | your API secret |
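The optional `interval` parameter only accepts the values listed in the table. A client-side check such as the following sketch can catch a wrong value before the request is sent. The helper name `build_video_params` and the constant `ALLOWED_INTERVALS` are assumptions made for illustration.

```python
# Allowed frame intervals in seconds, as listed in the parameter table
ALLOWED_INTERVALS = (0.5, 1, 2, 3, 4, 5)


def build_video_params(api_user: str, api_secret: str,
                       models: str = "text", interval=None) -> dict:
    """Build the form fields for a video check, validating interval."""
    params = {"models": models, "api_user": api_user, "api_secret": api_secret}
    if interval is not None:
        if interval not in ALLOWED_INTERVALS:
            raise ValueError(f"interval must be one of {ALLOWED_INTERVALS}")
        params["interval"] = interval
    return params


print(build_video_params("{api_user}", "{api_secret}", interval=2))
```

The returned dictionary can be passed as `data=` to `requests.post`, alongside the `files` argument used in the Python example above.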

Option 2: Long video

Here's how to proceed to analyze a long video. Note that if the video file is very large, you might first need to upload it through the Upload API.

curl -X POST 'https://api.sightengine.com/1.0/video/check.json' \

-F 'media=@/path/to/video.mp4' \

-F 'models=text' \

-F 'callback_url=https://yourcallback/path' \

-F 'api_user={api_user}' \

-F 'api_secret={api_secret}'

# this example uses requests

import requests

import json

params = {

# specify the models you want to apply

'models': 'text',

# specify where you want to receive result callbacks

'callback_url': 'https://yourcallback/path',

'api_user': '{api_user}',

'api_secret': '{api_secret}'

}

files = {'media': open('/path/to/video.mp4', 'rb')}

r = requests.post('https://api.sightengine.com/1.0/video/check.json', files=files, data=params)

output = json.loads(r.text)

$params = array(

'media' => new CurlFile('/path/to/video.mp4'),

// specify the models you want to apply

'models' => 'text',

// specify where you want to receive result callbacks

'callback_url' => 'https://yourcallback/path',

'api_user' => '{api_user}',

'api_secret' => '{api_secret}',

);

// this example uses cURL

$ch = curl_init('https://api.sightengine.com/1.0/video/check.json');

curl_setopt($ch, CURLOPT_POST, true);

curl_setopt($ch, CURLOPT_RETURNTRANSFER, true);

curl_setopt($ch, CURLOPT_POSTFIELDS, $params);

$response = curl_exec($ch);

curl_close($ch);

$output = json_decode($response, true);

// this example uses axios and form-data

const axios = require('axios');

const FormData = require('form-data');

const fs = require('fs');

const data = new FormData();

data.append('media', fs.createReadStream('/path/to/video.mp4'));

// specify the models you want to apply

data.append('models', 'text');

// specify where you want to receive result callbacks

data.append('callback_url', 'https://yourcallback/path');

data.append('api_user', '{api_user}');

data.append('api_secret', '{api_secret}');

axios({

method: 'post',

url:'https://api.sightengine.com/1.0/video/check.json',

data: data,

headers: data.getHeaders()

})

.then(function (response) {

// on success: handle response

console.log(response.data);

})

.catch(function (error) {

// handle error

if (error.response) console.log(error.response.data);

else console.log(error.message);

});

See request parameter description

| Parameter | Type | Description |

| media | file | video to analyze |

| callback_url | string | callback URL to receive moderation updates (optional) |

| models | string | comma-separated list of models to apply |

| interval | float | frame interval in seconds, out of 0.5, 1, 2, 3, 4, 5 (optional) |

| api_user | string | your API user id |

| api_secret | string | your API secret |
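For asynchronous calls, your server needs an endpoint listening at `callback_url`. The sketch below uses Python's standard `http.server` module and assumes the callback arrives as an HTTP POST with a JSON body. That delivery format is an assumption of this example, so check it against the callback documentation. The class name `CallbackHandler`, the `parse_callback` helper and port 8000 are hypothetical.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def parse_callback(body: bytes) -> dict:
    """Decode a callback body, assumed here to be UTF-8 encoded JSON."""
    return json.loads(body.decode("utf-8"))


class CallbackHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        # Read exactly the number of bytes announced by the client
        length = int(self.headers.get("Content-Length", 0))
        payload = parse_callback(self.rfile.read(length))
        # Hand the payload to your own processing code here
        print("received callback with status:", payload.get("status"))
        self.send_response(200)
        self.end_headers()


def serve(port: int = 8000) -> None:
    """Start a blocking HTTP server on the given (hypothetical) port."""
    HTTPServer(("", port), CallbackHandler).serve_forever()
```

Calling `serve()` blocks until the process is stopped. In production you would typically run an equivalent handler inside your existing web framework.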

Option 3: Live-stream

Here's how to proceed to analyze a live-stream:

curl -X GET -G 'https://api.sightengine.com/1.0/video/check.json' \

--data-urlencode 'stream_url=https://domain.tld/path/video.m3u8' \

-d 'models=text' \

-d 'callback_url=https://your.callback.url/path' \

-d 'api_user={api_user}' \

-d 'api_secret={api_secret}'

# this example uses requests

import requests

import json

params = {

'stream_url': 'https://domain.tld/path/video.m3u8',

# specify the models you want to apply

'models': 'text',

# specify where you want to receive result callbacks

'callback_url': 'https://your.callback.url/path',

'api_user': '{api_user}',

'api_secret': '{api_secret}'

}

r = requests.post('https://api.sightengine.com/1.0/video/check.json', data=params)

output = json.loads(r.text)

$params = array(

'stream_url' => 'https://domain.tld/path/video.m3u8',

// specify the models you want to apply

'models' => 'text',

// specify where you want to receive result callbacks

'callback_url' => 'https://your.callback.url/path',

'api_user' => '{api_user}',

'api_secret' => '{api_secret}',

);

// this example uses cURL

$ch = curl_init('https://api.sightengine.com/1.0/video/check.json');

curl_setopt($ch, CURLOPT_POST, true);

curl_setopt($ch, CURLOPT_RETURNTRANSFER, true);

curl_setopt($ch, CURLOPT_POSTFIELDS, $params);

$response = curl_exec($ch);

curl_close($ch);

$output = json_decode($response, true);

// this example uses axios and form-data

const axios = require('axios');

const FormData = require('form-data');

const fs = require('fs');

const data = new FormData();

data.append('stream_url', 'https://domain.tld/path/video.m3u8');

// specify the models you want to apply

data.append('models', 'text');

// specify where you want to receive result callbacks

data.append('callback_url', 'https://your.callback.url/path');

data.append('api_user', '{api_user}');

data.append('api_secret', '{api_secret}');

axios({

method: 'post',

url:'https://api.sightengine.com/1.0/video/check.json',

data: data,

headers: data.getHeaders()

})

.then(function (response) {

// on success: handle response

console.log(response.data);

})

.catch(function (error) {

// handle error

if (error.response) console.log(error.response.data);

else console.log(error.message);

});

See request parameter description

| Parameter | Type | Description |

| stream_url | string | URL of the video stream |

| callback_url | string | callback URL to receive moderation updates (optional) |

| models | string | comma-separated list of models to apply |

| interval | float | frame interval in seconds, out of 0.5, 1, 2, 3, 4, 5 (optional) |

| api_user | string | your API user id |

| api_secret | string | your API secret |

Moderation result

The moderation result will be provided either directly in the request response (for sync calls, see below) or through the callback URL you provided (for async calls).

Here is the structure of the JSON response with moderation results for each analyzed frame under the data.frames array:

{

"status": "success",

"request": {

"id": "req_gmgHNy8oP6nvXYaJVLq9n",

"timestamp": 1717159864.348989,

"operations": 21

},

"data": {

"frames": [

{

"info": {

"id": "med_gmgHcUOwe41rWmqwPhVNU_1",

"position": 0

},

"text": {

"has_artificial": 0.01,

"has_natural": 0.02,

"boxes": []

}

},

...

]

},

"media": {

"id": "med_gmgHcUOwe41rWmqwPhVNU",

"uri": "yourfile.mp4"

}

}

You can use the values under the text object of each frame to determine whether the video contains natural or artificial text.
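As an illustration, the following sketch walks through `data.frames` and collects the `position` of each frame whose `has_artificial` score reaches a threshold. The 0.5 threshold and the function name `frames_with_artificial_text` are assumptions of this example, and the second frame in the sample data is made up to show a positive case.

```python
def frames_with_artificial_text(result: dict, threshold: float = 0.5) -> list:
    """Return the positions of frames likely to contain artificial text."""
    flagged = []
    for frame in result.get("data", {}).get("frames", []):
        if frame["text"]["has_artificial"] >= threshold:
            flagged.append(frame["info"]["position"])
    return flagged


# Illustrative data following the structure shown above
sample = {
    "status": "success",
    "data": {
        "frames": [
            {"info": {"id": "frame_1", "position": 0},
             "text": {"has_artificial": 0.01, "has_natural": 0.02, "boxes": []}},
            {"info": {"id": "frame_2", "position": 1000},
             "text": {"has_artificial": 0.97, "has_natural": 0.05, "boxes": []}},
        ]
    },
}
print(frames_with_artificial_text(sample))  # prints [1000]
```

The same loop can be adapted to `has_natural`, or to inspect the `boxes` of each frame.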